How systems work

METASKILLS Chapter 20

A system is a set of interconnected elements organized to achieve a purpose. For example, the plumbing in your house is a system organized to deliver clean water and flush waste water away. A business is a system organized to turn materials and labor into profit-making products and services. A government is a system that’s organized to protect and promote the welfare of its citizens. When you think about it, even a product like a movie is a system, designed to create a theatrical experience for an audience.

A system can contain many subsystems, and it can also be part of a larger system. How you define the system depends on where you draw its boundary. And where you draw its boundary depends on what you want to understand or manipulate. If you’re the director of a movie, for example, you’ll want to draw a boundary around the whole project so that you can manage the relationships among the story elements, the locations, the sets, the performances, the technical production, the schedule, the costs, and so on. You might also draw boundaries around subsystems like individual scenes, stunts, and camera moves, so you can control the various relationships within each of those, as well as their relationships to the whole.

The structure of a system includes three types of components: elements, interconnections, and a purpose. It also includes a unique set of rules—an internal logic—that allows the system to achieve its purpose. The system itself, along with its rules, determines its behavior. That’s why organizations don’t change just because people change. The system itself determines, to a large extent, how the people inside it behave. It’s useless to blame the collapse of the banking industry on individual executives or specific events. The very structure of the banking system is to blame, since it’s tilted in favor of corrupt actors and selfish behaviors. Therefore we might think about redesigning the system so corruption is not so easy or profitable. Or we might make improvements to the larger system in which it operates, say capitalism itself. We might even question the cultural norms and beliefs that gave rise to 20th-century capitalism in the first place.

To improve a complex system, you first have to understand it, at least a little. I say “a little” because complex systems are always part mechanics, part mystery. Take the example of a company. Like all systems, a company has inflows and outflows, plus feedback systems for monitoring their movements. A CEO might have a good grasp of the system’s elements (its divisions, product lines, departments, competencies, key people), its interconnections (communications, distribution channels, partnerships, customer relationships), and its purpose (mission, vision, goals). She might also understand the system’s operational rules (processes, methodologies, cultural norms). But it’s still difficult for any one person to know how the company is actually doing in real time. So she uses the system’s feedback mechanisms (revenues, earnings, customer research) to get a read on the situation.

But there’s a catch.

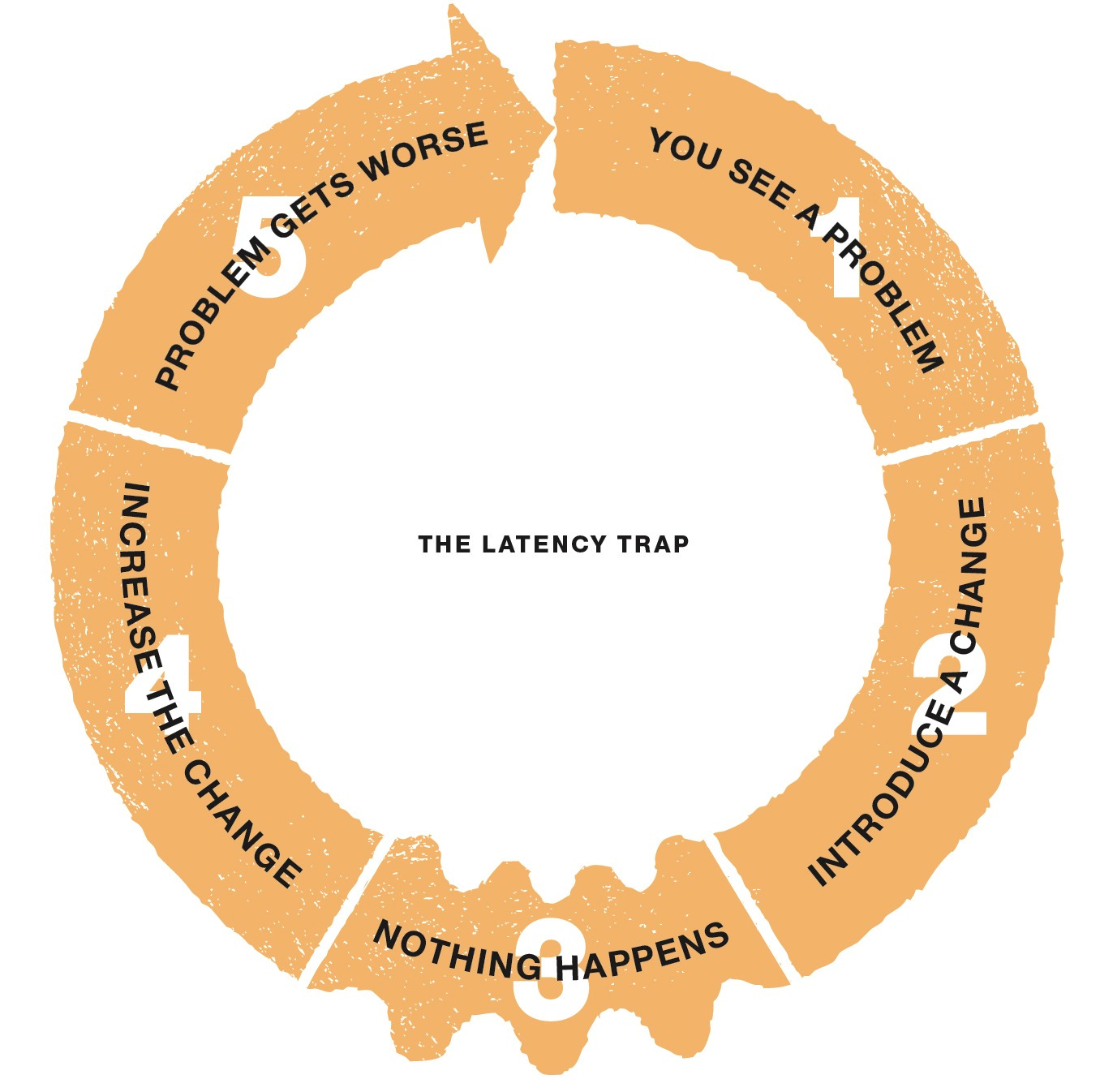

The feedback mechanisms in most systems are subject to something called latency, a delay between cause and effect, or between cause and feedback, in which crucial information arrives too late to act upon. While the revenue reports are up to date in a traditional sense, they only show the results of last quarter’s efforts or last year’s strategy. They’re lagging indicators, not leading indicators, of the company’s actual progress. By the time she gets the numbers, the situation is a fait accompli. Any straightforward response based on the late information is likely to be inadequate, ineffective, or wrong.

How can she work around this problem? By looking at the company as a system instead of individual parts or separate events. She can anticipate the eventual feedback by finding out which indicators are worth watching.

For example, she might watch levels of brand loyalty as an indicator of future profit margins. Or products in the pipeline as an indicator of higher revenues. Or trends in the marketplace as an indicator of increasing demand. Of course, leading indicators can be tricky, since they’re only predictions. But if she keeps at it, continuously comparing predictions with outcomes, she can start to gain confidence in the indicators that matter. This is known as “getting a feel” for the business.
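If you wanted to make “comparing predictions with outcomes” a little more concrete, one rough way is to count how often an indicator’s move in one quarter matched the outcome’s move in the next. The sketch below is only an illustration: the function name `hit_rate`, the one-quarter lag, and every number in the lists are made up for the example, not taken from a real company.

```python
def hit_rate(indicator, outcome, lag=1):
    """Share of periods where the indicator's direction of change
    matched the outcome's direction of change `lag` periods later."""
    hits = 0
    total = 0
    for t in range(1, len(indicator) - lag):
        indicator_up = indicator[t] > indicator[t - 1]
        outcome_up = outcome[t + lag] > outcome[t + lag - 1]
        hits += indicator_up == outcome_up  # True counts as 1
        total += 1
    return hits / total


# Hypothetical quarterly figures, invented purely for illustration.
revenue = [100, 100, 104, 109, 106, 103, 107, 112]
brand_loyalty = [50, 52, 55, 53, 51, 54, 58, 57]  # tends to move one quarter ahead of revenue
coffee_spend = [5, 6, 5, 6, 5, 6, 5, 6]           # unrelated noise

print(hit_rate(brand_loyalty, revenue))  # 1.0
print(hit_rate(coffee_spend, revenue))   # 0.5
```

With these invented numbers, brand loyalty called the direction of next quarter’s revenue every time, while coffee spending did no better than a coin flip. Real indicators will land somewhere in between, which is why the comparison has to be repeated quarter after quarter.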

In systems theory, any change that reinforces an original change is called reinforcing or positive feedback. Any change that dampens the original change is called balancing or negative feedback.

For example, if a company’s product starts to take off, other customers may notice and jump on the bandwagon. This is an example of reinforcing feedback. If the product keeps selling, it may eventually lose steam as it runs out of customers, meets with increasing competition, falls behind in its technology, or simply becomes unfashionable. These are examples of balancing feedback. By keeping an eye on these two feedback loops, the CEO can get ahead of the curve and make decisions that mitigate or reverse the situation.
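The interplay of the two loops can be sketched in a few lines of code. This is a toy model under stated assumptions, not a forecast: the market size, the starting customer count, and the `word_of_mouth` rate are all hypothetical, and the only balancing force modeled is running out of customers.

```python
def simulate_adoption(market_size=1000, initial_customers=10,
                      word_of_mouth=0.5, periods=30):
    """Return the customer count for each period (all parameters are illustrative)."""
    customers = float(initial_customers)
    history = [customers]
    for _ in range(periods):
        # Reinforcing loop: the more customers there are, the more newcomers they attract.
        attracted = word_of_mouth * customers
        # Balancing loop: as the market fills up, fewer prospects are left to attract.
        remaining_share = 1 - customers / market_size
        customers += attracted * remaining_share
        history.append(customers)
    return history


history = simulate_adoption()
early_growth = history[2] - history[1]
late_growth = history[-1] - history[-2]
print(round(history[-1]), early_growth > late_growth)
```

Early on the reinforcing loop dominates and each period adds more customers than the one before. Later the balancing loop takes over and growth flattens out as the count approaches the size of the market.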

But let’s get back to the problem of latency. Every change to a system takes a little time to show up. This is best illustrated by the classic story of the “unfamiliar shower.” Imagine you’re staying at the house of some friends, and you go to use their shower for the first time. It’s a chilly morning. You turn on the taps, step in, and suddenly jump back. Whoa! The water comes out like stabbing needles of ice! So you gingerly reach in again and turn the hot up and the cold down. No change. Okay, one more adjustment and—ahhh—the water warms up enough to stand under the shower head. Then, just as suddenly, the reverse occurs. The water turns violently hot and this time you jump all the way out. “Yowww!” you scream, waking up the house.

What just happened? In systems-thinking terms, the delay between cause and effect—between adjusting the taps and achieving the right temperature—deprived you of the information you needed to make appropriate changes to the hot and cold water. After a few times using the shower (assuming you were allowed to stay), you learned to wait for the feedback before fiddling with the taps. And you later learned where the taps should end up to achieve the right temperature.
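The shower story can be played out as a small simulation. It is a minimal sketch, assuming the water you feel now reflects the tap setting from `delay` steps ago. The temperatures (in degrees Celsius), the delay, and the `gain` that sets how hard the bather turns the taps are hypothetical values chosen only to make the effect visible.

```python
from collections import deque


def run_shower(delay=4, wait=1, gain=0.6, target=38.0,
               cold=10.0, hot=60.0, steps=60):
    """Return the temperature felt at each step (all parameters are illustrative)."""
    pipe = deque([cold] * delay)  # water already in the pipe, oldest first
    setting = cold                # where the taps currently point
    felt = []
    for step in range(steps):
        current = pipe.popleft()  # what arrives now was set `delay` steps ago
        felt.append(current)
        if step % wait == 0:
            # Adjust in proportion to how wrong the water feels right now.
            setting += gain * (target - current)
            setting = min(hot, max(cold, setting))  # taps have physical limits
        pipe.append(setting)
    return felt


impatient = run_shower(wait=1)  # fiddles with the taps at every step
patient = run_shower(wait=4)    # waits for the feedback before adjusting again

print(max(impatient), max(patient))
```

The impatient bather keeps turning up the heat while the cold water is still draining from the pipe, so the taps end up far past the target and the water arrives scalding. The patient bather makes the same kind of adjustment, but only after feeling the result of the previous one, and the temperature creeps up to the target without overshooting.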

Here are some other examples of system delays:

The state government raises taxes on business. The immediate result is more revenue for public works, but over time businesses pull out and fewer move in, thereby lowering the revenue available for public works.

A mother is concerned that her children may be exposed to danger if they’re allowed to roam the neighborhood freely, so she keeps them close and controls their interactions with friends. At first this keeps them safe, but as they grow older they suffer from impaired judgment in their interactions with the broader world.

A company is hit by an industry downturn and its profits begin to sag. It reacts quickly by laying off a number of highly paid senior employees. While this solves the immediate problem, the talent-starved company falls behind its competitors just as the economy picks up.

A student feels that his education is taking too long, so he drops out of college and joins the workplace. He makes good money while his college friends struggle to pay their bills. Over time, his lack of formal education puts a cap on his income while his friends continue up the ladder.

A salesman meets his quota by pressuring customers to buy products that aren’t quite right for their needs. The next time he tries to make a sale, he finds them less agreeable.

A bigger child learns that she can bully the other children in school. At first this feels empowering, but over time she finds she’s excluded from friendships with other children she admires.

The common thread in all these stories is that an immediate solution caused an eventual problem. None of the protagonists could see around the corner because they were thinking in short, straight lines.

Our emotional brains are hardwired to overvalue the short term and undervalue the long term. When there’s no short-term threat, there’s no change to our body chemistry to trigger fight or flight. If you pulled back your bedsheet one night and found a big, black spider, your brain would light up like a Christmas tree. But if you were told that the world’s population will be decimated by rising ocean waters before the year 2030, your brain would barely react. We’re genetically attuned to nearby dangers and unimpressed by distant dangers, even when we know that the distant ones are far more dangerous. For example, I know I should earthquake-proof my house in fault-riddled California, but today I have to oil the squeaky hinge on my bedroom door. It’s driving me crazy.

Latency is the mother of all systems traps. It plays to our natural weaknesses, since our emotional brain is so much more developed than our newer rational brain. It takes much more effort to think our way through problems than to merely react to them. We have to exert ourselves to override our automatic responses when we realize they’re not optimal.

In this way, systems thinking isn’t only about seeing the big picture. It’s about seeing the long picture. It’s more like a movie than a snapshot. My wife can predict the ending of a movie with uncanny accuracy after viewing the first few minutes. How? Through a rich understanding of plot patterns and symbolism, acquired over years of watching films and reading fiction, that lets her imagine the best resolution. In other words, she understands the system of storytelling.

But there’s more to systems than watching for patterns, loops, and latency. You also have to keep an eye on archetypes.

Brilliant summary Marty. Love your insights and style of writing - it just keeps me reading. Thanks and keep them coming.

Love the 'taps' illustration. First, it happened to me in a B&B in Scotland, but the innkeeper played a purposeful role in the latency. She was tired of Yanks driving up her fuel bill so if you demanded really hot water the system had an internal 'rev limiter' that defaulted to somewhere between tepid and freezing. If you demanded only warm water from the tap, everything was peachy. Second, as a member of enterprise scale management teams I often thought our companies were like giant steamships that take miles and miles to turn. The problem was always that by the time you finished a turn, another one was always immediately required to respond to a changing market - the company leaves a series of 's' shapes in the wake behind. Eventually, everyone just gets seasick.